- GPT 5.5 is significantly more token-efficient, delivering strong performance at lower latency and resource cost.

- The model requires a fundamental shift in prompt engineering; users must provide all necessary constraints and context upfront to avoid 'lazy' output.

- Thread degradation is a major hurdle: once the context is corrupted, the corruption persists despite attempts to correct the model, which makes long-lived sessions impractical.

Channel: Theo - t3.gg

GPT 5.5 Performance Evaluation and Architectural Limitations

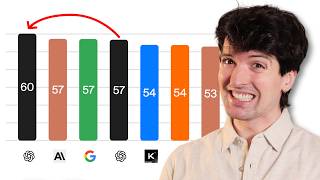

This video provides an in-depth performance analysis of OpenAI's GPT 5.5, evaluating its coding capabilities, token efficiency, and significant architectural shifts compared to previous models.

Key Takeaways

- GPT 5.5 exhibits superior token efficiency and coding performance but suffers from poor context management, necessitating frequent thread resets.

- The model demonstrates a 'lazy' execution pattern where it often fails to honor complex intent unless provided with rigorous, explicit upfront instructions.

- While the Pro version achieves state-of-the-art results in competitive cipher-solving and logic puzzles, it remains difficult to steer once it converges on incorrect logic.

Analysis

This analysis is crucial for developers and power users who rely on LLMs for autonomous software engineering. As models become more capable, the 'steering' problem (keeping a model on track through complex, multi-turn reasoning) becomes the primary bottleneck for productivity.

Key Takeaways:

- Strategic Shift: OpenAI is moving toward models that prioritize efficiency over 'chatty' or over-explanatory responses. This reflects the industry's push for cheaper, faster inference.

- The 'Context Trap': A non-obvious consequence of these more rigid models is that their internal 'certainty' can become a liability. Because the model anchors so strongly to early context, it can resist in-session correction, effectively turning its sophistication into a stubborn black box.

- The Developer Impact: Developers should anticipate that 'prompting' is shifting away from conversational guidance and toward 'environment construction', where the metadata, context, and constraints provided at the start determine whether the entire engagement succeeds.
